The API should guide you

We create APIs all the time, and I don’t mean only libraries and frameworks. Every piece of code that’s intended to be called by another piece of code is an API, in some sense. It’s our job to define an interface that callers will use to achieve whatever they need.

While discussing various API designs, we often focus on “how it’s gonna look” first. Does it allow fluent calls? Does it rely on annotations? Is the naming accurate? Don’t get me wrong - these are all valid questions. Yet, these “visuals” often distract us from the actual usability.

The API should guide you. A well-designed API lets you get things done the way you expect. A well-designed API prevents you from making bad choices. Finally, a well-designed API has the so-called “best practices” built in.

Feels obvious? You’d be surprised how often we forget these things! Although we will focus on popular libraries here, all the rules described could be easily applied to our day-to-day code.

The principle of least surprise

Imagine a fairly straightforward task - calculating the sum of a stream of numbers. To make things a bit more complicated, let’s do it in parallel. With Java & Project Reactor this could look as follows (no previous experience needed):

    Flux.range(1, 1000) // stream of 1000 numbers
        .subscribeOn(Schedulers.boundedElastic()) // custom "thread pool"
        .parallel(3) // 3 parallel forks
        // expected to be executed in parallel...
        .reduce(Integer::sum)
        .single() // back to sequential
        .block(); // await result (blocking)

So far so good. The code compiles and, when run, returns the correct value. Yet, it still runs sequentially! Is parallel(...) broken? In some sense, yes. The answer becomes obvious when reading the Javadoc:

Note that to actually perform the work in parallel, you should call ParallelFlux.runOn(Scheduler) afterward.

So, to behave as we expect, the code has to be modified like this:

    // ...
    .parallel(3) // 3 parallel forks
    .runOn(Schedulers.parallel()) // required to run in parallel!
    .reduce(Integer::sum)
    // ...

Since passing the Scheduler via runOn(...) is required for parallel(...) to work as expected, why isn’t it a required parameter? Why do we have to remember the second call every time we use it?

This is an example of violating the principle of least surprise:

A component of a system should behave in a way that most users will expect it to behave.

A well-designed API lets you get things done the way you expect. It reduces the number of possible mistakes and cares about the user experience. Simply put, it avoids surprising its users.

Instead of documenting unusual behavior and things to be remembered, try to construct the API in a way that makes them obvious.
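To make this concrete, here is a minimal sketch in plain Java rather than Reactor. ParallelSum and its sum(...) method are hypothetical names invented for this illustration, not part of any library. The only point is that the executor is a required argument, so the “parallel, but actually sequential” variant cannot be written by accident.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Objects;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.Executor;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.stream.Collectors;
import java.util.stream.IntStream;

// Hypothetical helper, not part of Project Reactor or the JDK.
final class ParallelSum {

    private ParallelSum() {}

    // The executor is part of the signature: there is no way to ask for
    // parallelism without also saying where the work should run.
    static long sum(List<Integer> numbers, int parallelism, Executor executor) {
        Objects.requireNonNull(numbers, "numbers");
        Objects.requireNonNull(executor, "executor");
        if (parallelism < 1) {
            throw new IllegalArgumentException("parallelism must be >= 1");
        }

        // Split the input into at most `parallelism` slices of similar size.
        int chunk = Math.max(1, (numbers.size() + parallelism - 1) / parallelism);
        List<CompletableFuture<Long>> partials = new ArrayList<>();
        for (int from = 0; from < numbers.size(); from += chunk) {
            List<Integer> slice = numbers.subList(from, Math.min(from + chunk, numbers.size()));
            CompletableFuture<Long> partial = CompletableFuture.supplyAsync(
                    () -> slice.stream().mapToLong(Integer::longValue).sum(), executor);
            partials.add(partial);
        }

        // Wait for every slice and combine the partial sums.
        long total = 0;
        for (CompletableFuture<Long> partial : partials) {
            total += partial.join();
        }
        return total;
    }

    public static void main(String[] args) {
        ExecutorService pool = Executors.newFixedThreadPool(3);
        try {
            List<Integer> numbers = IntStream.rangeClosed(1, 1000).boxed().collect(Collectors.toList());
            System.out.println(sum(numbers, 3, pool)); // prints 500500
        } finally {
            pool.shutdown();
        }
    }
}
```

Whether a required parameter or a sensible default scheduler is the better fix is a design decision. Either way, the caller no longer needs to read the Javadoc to get the behavior the method name promises.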

Not encouraging mistakes

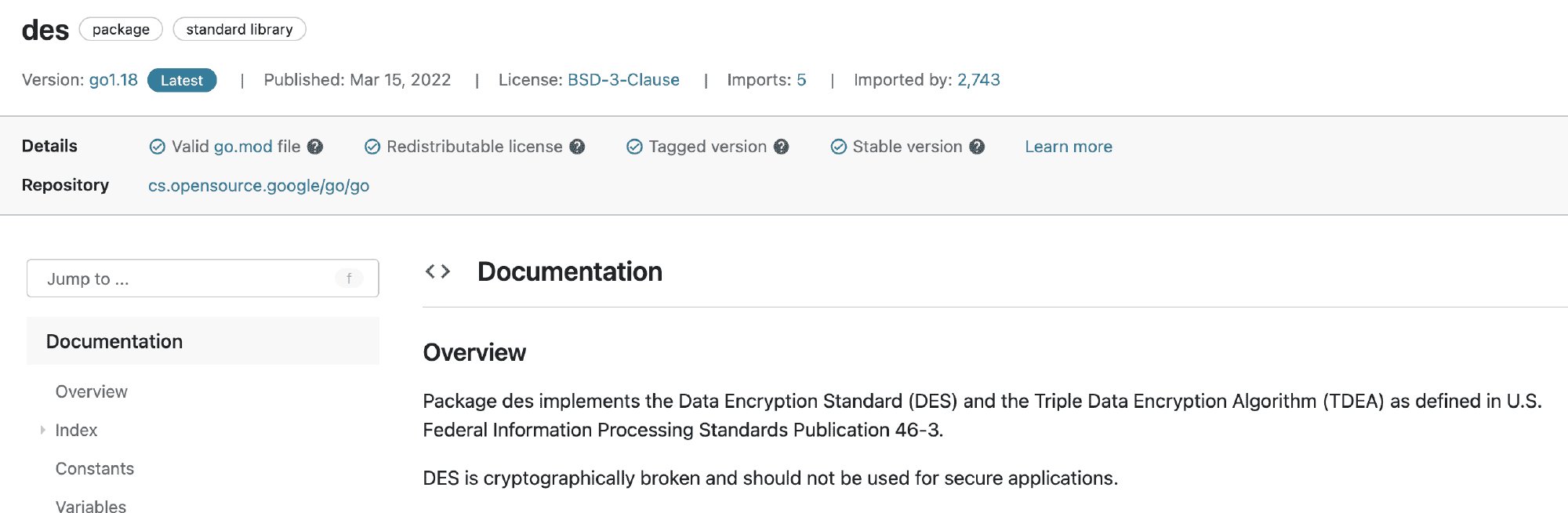

Certain APIs make me believe their intention is to encourage their users to make some really bad choices. My “favorite” examples are general-purpose cryptographic libraries. Whether built into the language (like the Go crypto package) or external (like Java BouncyCastle), the majority of them could do a lot more to promote proper cryptography.

Let’s say we need to encrypt something. At a high level, this task requires us to pick an algorithm and then provide it with a set of parameters (e.g. initialization vector, passphrase/key). It’s hard to imagine an API user who deliberately wants weak encryption. Yet, the libraries seem to “think” differently.

DES (Data Encryption Standard) was developed in the early ’70s, when computers were less powerful than today’s smartwatches. These days, its 56-bit key can be brute-forced with modest resources. The improved version, 3DES (Triple DES), dates back to 1981(!) and is not much better. Yet, both outdated algorithms are still present in many crypto libraries, probably for backward compatibility. What’s worse, there are tons of articles and StackOverflow answers suggesting they are still the way to go…

A well-designed API prevents you from making bad choices. In fact, it does even more and encourages you to do the right thing. And when you’re trying to do something bad, it warns you immediately.

For the encryption algorithms mentioned above, the first improvement could be a note in the API documentation. In fact, the Go crypto package does this. Personally, I’d go one step further and make it explicit at the code level. Marking the whole API as deprecated, or even adding a special prefix like insecure to the names, would immediately “yell” at anyone trying to use it. In an ideal world, DES/3DES would no longer be available.
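As a rough illustration of “yelling” at the code level, consider the sketch below. The Ciphers facade and both method names are hypothetical; only Cipher.getInstance(...) and the @Deprecated annotation come from the JDK. The assumption is that a legacy algorithm has to stay available, but the safe choice should be the obvious one.

```java
import java.security.GeneralSecurityException;
import javax.crypto.Cipher;

// Hypothetical facade; the class and method names are illustrative only.
final class Ciphers {

    private Ciphers() {}

    /** Recommended default: authenticated encryption (AES in GCM mode). */
    static Cipher aesGcm() {
        return cipher("AES/GCM/NoPadding");
    }

    /**
     * Legacy DES, kept only so that old data can still be read.
     *
     * @deprecated DES is considered broken; use {@link #aesGcm()} instead.
     */
    @Deprecated
    static Cipher insecureDes() {
        return cipher("DES/CBC/PKCS5Padding");
    }

    // Turns the checked lookup failure into an unchecked one for brevity.
    private static Cipher cipher(String transformation) {
        try {
            return Cipher.getInstance(transformation);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(transformation + " is not available", e);
        }
    }
}
```

With this shape, every call to insecureDes() produces a compiler deprecation warning, and the name itself shows up in code review. Neither replaces removing the algorithm, but both are harder to overlook than a paragraph in the documentation.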

Side note: there are positive cryptographic examples too. Libraries like libsodium or Google Tink aim to provide simple cryptographic abstractions that are hard to misuse. Keep in mind that overall security depends not only on the selected algorithm but also on the parameters provided. These APIs do their best to offer a more declarative way of specifying them: the what instead of the how. That’s a huge topic on its own, but the bottom line is: prefer cryptographic libraries that do the risky stuff for you.
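To show what “what instead of how” could mean in practice, here is a small sketch built on the JDK’s javax.crypto classes. SimpleAead is a hypothetical name and this is not the libsodium or Tink API. It only illustrates the idea that the caller supplies a key and the data, while the algorithm, nonce size, and nonce generation are fixed by the wrapper. It is an illustration, not a vetted implementation, so prefer the libraries mentioned above for real use.

```java
import java.nio.ByteBuffer;
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;

// Hypothetical wrapper: callers never pick a mode, nonce, or tag length.
final class SimpleAead {

    private static final String TRANSFORMATION = "AES/GCM/NoPadding";
    private static final int NONCE_BYTES = 12;
    private static final int TAG_BITS = 128;
    private static final SecureRandom RANDOM = new SecureRandom();

    private SimpleAead() {}

    /** Generates a fresh 256-bit AES key. */
    static SecretKey newKey() {
        try {
            KeyGenerator generator = KeyGenerator.getInstance("AES");
            generator.init(256);
            return generator.generateKey();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("AES is not available", e);
        }
    }

    /** Returns nonce || ciphertext || tag; a new random nonce is used per call. */
    static byte[] encrypt(SecretKey key, byte[] plaintext) {
        try {
            byte[] nonce = new byte[NONCE_BYTES];
            RANDOM.nextBytes(nonce);
            Cipher cipher = Cipher.getInstance(TRANSFORMATION);
            cipher.init(Cipher.ENCRYPT_MODE, key, new GCMParameterSpec(TAG_BITS, nonce));
            byte[] ciphertext = cipher.doFinal(plaintext);
            return ByteBuffer.allocate(nonce.length + ciphertext.length)
                    .put(nonce)
                    .put(ciphertext)
                    .array();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("encryption failed", e);
        }
    }

    /** Reads the nonce from the message prefix and verifies the tag. */
    static byte[] decrypt(SecretKey key, byte[] message) {
        try {
            Cipher cipher = Cipher.getInstance(TRANSFORMATION);
            cipher.init(Cipher.DECRYPT_MODE, key,
                    new GCMParameterSpec(TAG_BITS, message, 0, NONCE_BYTES));
            return cipher.doFinal(message, NONCE_BYTES, message.length - NONCE_BYTES);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("decryption failed", e);
        }
    }
}
```

The interface has three methods and no knobs. There is nothing to get wrong about initialization vectors because the caller never sees one.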

Best practices built-in

If you’ve worked with HTTP clients before, you know setting timeouts is a reasonable thing to do. Tons of API documentation and blog posts recommend doing so. Yet, every library takes a slightly different approach to promoting their usage.

Shouldn’t APIs encourage appropriate use (“best practices”) themselves rather than promote extensive googling? 🤔

Take HTTP clients for example. They rarely promote/enforce setting timeouts explicitly…

(February 18, 2022)

Some clients, like Java’s built-in HttpClient or the Go HTTP client, don’t set any timeouts by default. This means that if you don’t specify them explicitly, an HTTP call can hang forever. Consequences? Poor resiliency, cascading failures, and leaking resources (threads, connections, etc.). Trust me, you probably don’t want to go live with such settings.

Others, like Spring WebClient or OkHttp, also do not enforce setting timeouts directly but have more reasonable defaults (30 and 10 seconds respectively). That’s definitely better than nothing.

I still wonder why such tools avoid making timeouts a required part of their APIs. My guess is that some consider adding more required parameters bad design. Visuals vs usability - 1:0.

A well-designed API has its “best practices” built in. It takes care of reasonable defaults, focusing on the typical use cases. Instead of relegating usage recommendations to the documentation, it makes them an integral part of the interface.
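As a sketch of what “timeouts as a required part of the API” could look like, the hypothetical TimeoutAwareHttp factory below wraps the JDK’s java.net.http client. The factory and its method names are assumptions made for this example; the builder calls connectTimeout(...) and timeout(...) are the real JDK ones. There is simply no overload that creates a client or a request without a timeout.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.time.Duration;
import java.util.Objects;

// Hypothetical factory around the JDK's java.net.http API.
final class TimeoutAwareHttp {

    private TimeoutAwareHttp() {}

    /** Creates a client; the connect timeout cannot be left out. */
    static HttpClient client(Duration connectTimeout) {
        return HttpClient.newBuilder()
                .connectTimeout(requirePositive(connectTimeout, "connectTimeout"))
                .build();
    }

    /** Creates a GET request; the request timeout cannot be left out. */
    static HttpRequest get(URI uri, Duration requestTimeout) {
        return HttpRequest.newBuilder(Objects.requireNonNull(uri, "uri"))
                .timeout(requirePositive(requestTimeout, "requestTimeout"))
                .GET()
                .build();
    }

    // Rejects null, zero, and negative durations with a descriptive message.
    private static Duration requirePositive(Duration value, String name) {
        Objects.requireNonNull(value, name);
        if (value.isZero() || value.isNegative()) {
            throw new IllegalArgumentException(name + " must be positive");
        }
        return value;
    }
}
```

A call then reads, for example, TimeoutAwareHttp.client(Duration.ofSeconds(2)) followed by TimeoutAwareHttp.get(URI.create("https://example.com/"), Duration.ofSeconds(5)), where the URL and both values are placeholders. The extra arguments cost a few characters, and in exchange nobody ships a client that can hang forever because they skipped a blog post.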

Summary

Exposing an API is a liability. Just like any user-facing solution, it requires considering usability. A good API does not surprise its users but instead shows them how to do things properly. It’s constructed in a way that reduces the possibility of a mistake. Instead of forcing users to google for “XYZ best practices”, it takes care of such aspects directly.

Of course, not every API requires the same level of care. The aspects described here matter most for publicly available APIs, like libraries. Still, aiming to guide the user is usually a good thing, no matter where it’s applied.